The term “big data” literally means a huge amount of information stored on any medium.

- Big Data Sources

- The history of the emergence and development of Big Data

- Techniques and methods for analyzing and processing big data

- Big data development prospects and trends

- Big data in marketing and business

- Examples of using Big Data

- Big Data Issues

- Big data technology market in Russia and worldwide

- The best books on Big Data

The volume of such data is so large that processing it with conventional software or hardware is impractical and, in some cases, impossible.

Big Data is not only the data itself but also the technologies for processing and using it, and the methods for finding the necessary information in large arrays. The problem of big data remains open and pressing for any system that has been accumulating a wide variety of information for decades.

Big Data Sources

Social networks are a typical source of big data: each profile or public page is one small drop in an unstructured ocean of information. And regardless of how much information a given profile stores, interaction with every user should be as fast as possible.

Big data is constantly accumulating in almost every area of human life, in any industry that involves either human interaction or computing. This includes social media, medicine, and the banking sector, as well as instrument systems that produce large volumes of measurements and calculations every day, such as astronomical observations, meteorological data, and readings from Earth remote sensing devices.

Information from various real-time tracking systems is also sent to the servers of a particular company. Television and radio broadcasting and the call databases of mobile operators are further examples: each individual's interaction with them is minimal, but in aggregate all this information becomes big data.

Big data technologies have become integral to R&D and commerce. They are also making their way into public administration, and in every one of these fields increasingly efficient systems for storing and manipulating information are required.

The history of the emergence and development of Big Data

The term “big data” is commonly said to have first appeared in the press in 2008, when Clifford Lynch published an article in Nature on how big data technologies could advance the future of science. Until 2009, the term was used only in the context of scientific analysis, but after several more articles appeared, the press began to use the concept of Big Data widely, and it continues to do so today.

In 2010, the first attempts to solve the growing problem of big data appeared: software products were released that aimed to minimize the risks of working with huge information arrays.

By 2011, large companies such as Microsoft, Oracle, EMC and IBM had become interested in big data. They were the first to build big data into their development strategies, and did so quite successfully.

Universities began to teach big data as a separate subject as early as 2013. Problems in this area are now addressed not only by data science but also by engineering and computing disciplines.

Techniques and methods for analyzing and processing big data

The main methods of data analysis and processing include the following:

Data mining methods

These methods are numerous, but they share one thing: they combine mathematical tools with advances in information technology.

Crowdsourcing

This technique gathers data from many sources simultaneously, and the number of sources is practically unlimited.

A/B testing

From the full data set, a control set of elements is selected and compared in turn with other similar sets in which one element has been changed. Such tests help determine which parameter changes have the greatest effect on the control population. The volume of Big Data makes it possible to run a huge number of iterations, each one bringing the result closer to a reliable one.
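The comparison between a control set and a changed variant can be sketched as follows. The article does not name a specific statistic, so the two-proportion z-test used here is an assumption, as are the function name `two_proportion_z_test` and the conversion counts, which are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare the conversion rate of control A with that of variant B.

    Returns the z statistic and the two-sided p-value.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B behave the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_b - p_a) / se
    # Two-sided p-value of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 10,000 users per group, 200 vs. 260 conversions.
z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests that the changed element, rather than chance, explains the difference. With more data the standard error shrinks, which is one way to read the remark that large volumes bring the result closer to a reliable one.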

Predictive Analytics

Specialists in this field try to predict in advance how the object under observation will behave, so that the most advantageous decision can be made in a given situation.
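As a minimal illustration of predicting behavior in advance, the sketch below fits a straight line to past observations and extrapolates one step ahead. Least-squares linear regression is only one of many possible techniques and is an assumption here; the helper `fit_line` and the monthly sales figures are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: sales for months 1 to 6.
months = [1, 2, 3, 4, 5, 6]
sales = [100, 110, 120, 130, 140, 150]

slope, intercept = fit_line(months, sales)
forecast = slope * 7 + intercept  # extrapolate to month 7
print(f"forecast for month 7: {forecast:.1f}")
```

Real predictive models usually involve many variables and a validation step on held-out data. The point here is only the pattern: learn from history, then extrapolate.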

Machine learning (artificial intelligence)

It is based on empirical analysis of information and the subsequent construction of self-learning algorithms.

Network analysis

The most common method for studying social networks: once statistical data has been collected, the nodes of the network are analyzed, that is, the interactions between individual users and their communities.
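One simple way to analyze the nodes of a network is to measure how connected each user is. The sketch below computes degree centrality from a list of interactions. The choice of this metric, the function name `degree_centrality`, and the sample users are assumptions made for illustration.

```python
from collections import defaultdict


def degree_centrality(edges):
    """Return, for every node, the share of other nodes it is linked to."""
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(adj) / (n - 1) for node, adj in neighbors.items()}


# Hypothetical interactions between five users.
edges = [
    ("ann", "bob"),
    ("ann", "cat"),
    ("ann", "dan"),
    ("bob", "cat"),
    ("dan", "eve"),
]

centrality = degree_centrality(edges)
most_connected = max(centrality, key=centrality.get)
print(most_connected, centrality[most_connected])
```

Users with high centrality are candidates for community hubs. On real social graphs, dedicated graph libraries and distributed processing would replace this in-memory dictionary.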

Big data development prospects and trends

In 2017, big data is no longer something new and unknown, yet its importance has not decreased; it has grown. Experts now expect that the analysis of large amounts of data will become available not only to giant organizations but also to small and medium-sized businesses. This is planned to be achieved through the following components:

Cloud Storage

Data storage and processing are becoming faster and more economical: compared with the cost of maintaining your own data center and possibly expanding staff, renting cloud capacity appears to be a much cheaper alternative.

Using Dark Data

So-called “dark data” is all non-digitized information about a company that plays no key role in its day-to-day use but may serve as a reason to move to a new information storage format.

Artificial Intelligence and Deep Learning

Machine learning technology that mimics the structure and operation of the human brain is best suited to processing large amounts of constantly changing information. The machine does everything a person would otherwise have to do, but with a much lower probability of error.

Blockchain

This technology allows you to speed up and simplify numerous Internet transactions, including international ones. Another advantage of Blockchain is that it reduces transaction costs.

Self-service and price cuts

“Self-service platforms” are planned for introduction in 2017. These are free platforms where representatives of small and medium-sized businesses can independently evaluate and systematize the data they store.

Big data in marketing and business

All marketing strategies are in one way or another based on manipulating information and analyzing existing data. That is why big data can be used to forecast a company's further development and to adjust it.

For example, an RTB (real-time bidding) auction built on big data lets you use advertising more efficiently: a given product is shown only to the group of users interested in purchasing it.

What is the benefit of using big data technologies in marketing and business?

- With their help, you can much more quickly create new projects that are likely to become popular with buyers.

- They help match customer requirements against an existing or planned service and adjust the service accordingly.

- Big data methods let you assess the current satisfaction of all users and of each user individually.

- Big data processing methods help increase customer loyalty.

- Attracting the target audience on the Internet becomes easier thanks to the ability to work with huge amounts of data.

For example, one of the most popular services for predicting the likely popularity of a product is Google Trends. Marketers and analysts use it widely to obtain statistics on past interest in a product and a forecast for the next season. This lets company leaders distribute the advertising budget more effectively and decide where it is best to invest.

Examples of using Big Data

The active introduction of Big Data technologies into the market and into everyday life began once they were adopted by world-famous companies with customers in almost every corner of the globe.

These include giants such as Facebook, Google and IBM, as well as financial institutions such as Mastercard, Visa and Bank of America.

For example, IBM applies big data techniques to monetary transactions. With their help, 15% more fraudulent transactions were detected, which increased the amount of protected funds by 60%. The problem of false positives was also addressed: their number fell by more than half.

Visa used Big Data in a similar way, tracking fraudulent attempts to carry out transactions. This saves the company more than 2 billion US dollars a year.

The German Ministry of Labor managed to cut costs by 10 billion euros by introducing a big data system into the processing of unemployment benefits. In the process, it was found that a fifth of citizens were receiving these benefits without justification.

Big Data has not bypassed the gaming industry either. Thus, the developers of World of Tanks conducted a study of information about all players and compared the available indicators of their activity. This helped to predict possible future churn of players – based on the assumptions made, representatives of the organization were able to interact with users more effectively.

Notable organizations using big data also include HSBC, Nasdaq, Coca-Cola, Starbucks, and AT&T.

Big Data Issues

The biggest problem with big data is the cost of processing it. This includes both expensive equipment and the salaries of qualified specialists capable of handling huge amounts of information. The equipment also has to be upgraded regularly so that performance does not fall below the required minimum as the amount of data grows.

The second problem is again related to the sheer amount of information to be processed. If, for example, a study yields not 2-3 results but a large number of them, it is very difficult to remain objective and to select from the general data stream only those that have a real effect on the phenomenon under study.

The problem of privacy. With most customer services moving online, it is very easy to become the next target for cybercriminals. Even simply storing personal information, without making any online transactions, can have undesirable consequences for cloud storage customers.

The problem of information loss. As a precaution, a single one-time backup is not enough: at least 2-3 backup copies of the storage should be kept. However, as the volume grows, so does the complexity of maintaining redundancy, and IT specialists are still looking for the best solution to this problem.

Big data technology market in Russia and worldwide

As of 2014, services account for 40% of the big data market. Revenue from the use of Big Data in computer hardware is slightly lower, at 38%. The remaining 22% comes from software.

According to statistics, the most useful products for solving Big Data problems in the global segment are In-memory and NoSQL analytical platforms. Log-file analytical software and Columnar platforms occupy 15 and 12 percent of the market, respectively. Hadoop/MapReduce, by contrast, does not cope with big data problems very effectively in practice.

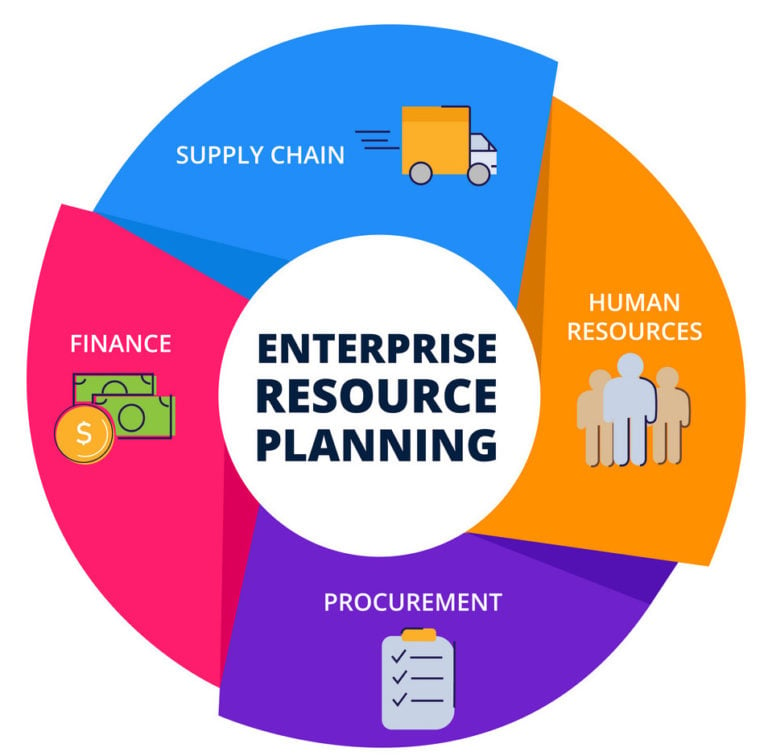

Results of implementing big data technologies:

- improved quality of customer service;

- optimized supply chain integration;

- optimized organizational planning;

- faster interaction with customers;

- more efficient processing of customer requests;

- reduced service costs;

- optimized processing of client requests.

The best books on Big Data

“The Human Face of Big Data” by Rick Smolan and Jennifer Erwitt

Suitable for a first introduction to big data processing technologies: it brings you up to date easily and clearly. It shows how the abundance of information has affected everyday life and all its areas: science, business, medicine, and so on. It contains numerous illustrations, so it is easy to read.

“Introduction to Data Mining” by Pang-Ning Tan, Michael Steinbach and Vipin Kumar

Also a useful book for beginners, which explains how to work with big data from the simple to the complex. It covers many topics that matter at the initial stage: preparing data for processing, visualization, OLAP, and some methods of analyzing and classifying data.

“Python Machine Learning” by Sebastian Raschka

A practical guide to working with big data in the Python programming language. Suitable both for engineering students and for professionals who want to deepen their knowledge.

“Hadoop For Dummies” by Dirk deRoos, Paul C. Zikopoulos and Roman B. Melnyk

Hadoop is a project designed for running distributed programs that coordinate work across thousands of nodes at the same time. Getting to know it helps you understand the practical application of big data in more detail.
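To give a feel for the programming model associated with Hadoop, here is a single-process sketch of the MapReduce pattern applied to word counting. It does not use Hadoop or any of its APIs; the function names `map_phase`, `shuffle` and `reduce_phase` and the sample documents are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def map_phase(document):
    """Map step: emit a (word, 1) pair for every word in one document."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs):
    """Shuffle step: group all emitted values by their key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce step: combine the values collected for one key."""
    return key, sum(values)


# Hypothetical input: each string stands in for a file on a separate node.
documents = ["big data is big", "data is everywhere"]

pairs = [pair for doc in documents for pair in map_phase(doc)]
counts = dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())
print(counts)
```

In a real cluster, the map and reduce steps would run in parallel on many nodes and the shuffle would move data across the network. Here everything runs in one process so that the three stages stay visible.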